When AI Risks Making Nonprofits Less Effective

A Recent MIT Study Calls for Caution

This week's essay is a short one, written during a quick break from keeping my 3-year-old out of the lake on a family trip. Vacations no longer exist, just 'parenting in a new location.'

Still, parenting in a new location is a nice change.

On the flight here, I listened to a podcast conversation between Cal Newport (Deep Work) and Brad Stulberg (Master of Change) about a recent MIT study on the effects of generative AI use. The study tracked a cohort of essay writers who used ChatGPT to varying degrees. Three findings stood out:

Those who used Gen-AI the most when writing showed 47% less brain activity than those who didn't use it at all. Perhaps an obvious result, but still an interesting confirmation that we really are outsourcing our cognitive effort to tools like ChatGPT and Claude.

Reviewers in the study consistently described the AI-assisted writing as 'soulless, empty, lacking individuality, typical.' Hardly a vote of confidence in its quality.

Those who used ChatGPT heavily in their writing for four months and then had it taken away from them wrote worse than those who had never used ChatGPT before.

That last finding suggests that skill atrophy is a serious risk to weigh when adopting AI tools. A question Cal and Brad discuss at length is, 'If a core skill is like a muscle that we've developed through some long, hard training, what happens when we stop using it because we've outsourced it?'

This question has implications for the nonprofit sector, both for individuals and for organizations, as we plan for AI adoption.

We're all riding a wave of marketing from AI companies pushing for product adoption, and the message is essentially: 'If you don't outsource as much of your work to these tools as possible, you're going to get left behind and become irrelevant.'

Before we buy that message, it's worth considering what we stand to lose by outsourcing the core functions of our work. Nonprofit teams are primarily staffed by knowledge workers who create value through their ideas rather than their physical labor, so by core functions I mean areas like strategy, idea generation, writing, and collaboration.

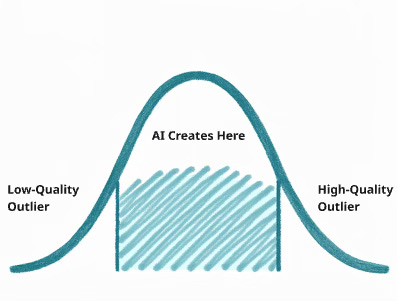

I find it helpful to picture Gen-AI output as a normal distribution (a bell curve). At a very simplistic level, a model tries to generate a response to its input that falls close to the median of what it has seen in its training data.

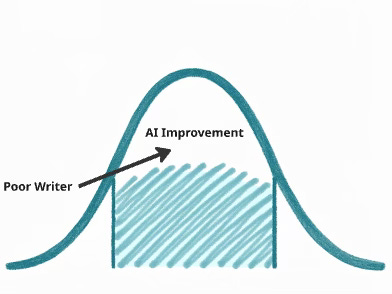

One way to look at what a Gen-AI tool like ChatGPT does when we ask it to write on our behalf: if someone is a below-average writer, ChatGPT might raise their writing to something closer to average. The tool might help that person produce better writing, but the risk is that they won't develop the skill themselves. They'll depend on AI tools to maintain their level of writing quality, and they might hit a ceiling at whatever the model considers 'average.'

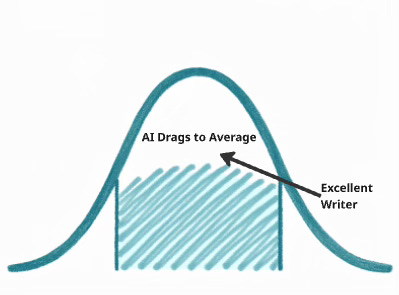

The risks are different for an excellent writer. Outsourcing the core task of writing to a Gen-AI tool risks dragging this person's quality down to average, since a model can't understand the experiences an excellent writer draws on to create a unique voice and perspective that stands out in the marketplace.

Jason Fried (37signals / Basecamp) calls AI-generated writing 'writing in brown.' Shreyas Doshi (product advisor) advises against using AI in strategy documents for similar reasons.

The point is that if writing or strategy is a core skill you make your living from, outsourcing it to AI carries real risks. Either we fail to develop skills we otherwise could have, or we drag ourselves from excellent to average just to save some mental strain.

The same risks extend from individual capability to corporate capability. In the nonprofit world, imagine an extreme case: a high-performing fundraising team makes an aggressive decision to outsource as much of its core function to Gen-AI tools as possible.

Major donor account strategy? Outsourced.

Proposal writing? Outsourced.

Impact reporting? Outsourced.

By 'outsourced,' I mean asking ChatGPT to 'write this donor account strategy for me' rather than 'analyze this donor's giving history so I can write this account strategy myself.'

The gift officers I work with are exceptional, and the relational capital they've built with their donors is hard-won. It's also very hard work, so I can see the temptation to say, 'ChatGPT, analyze this donor data set and write me a strategy for this next conversation.' But the MIT study suggests we should be cautious about two things in this scenario:

Quality, and by extension the revenue impact, risks being dragged from exceptional to average. This has real implications in the short- to mid-term.

Perhaps a greater risk is that, once the organization realizes the AI replacements for its gift officers are underperforming, leaders may find that this corporate skill set has degraded and needs to be rebuilt. It can't simply be turned back on. Just as the writers who lost access to ChatGPT wrote worse than those who had never used it, a gift officer who outsources donor account strategy and then tries to re-engage might find that they need to retrain that skill set to get back to their former level of excellence.

I don't know of any teams that are moving to fully outsource their core functions to AI agents at this point. But much of the messaging from the tech world says we should be thinking this way. This MIT study may be a leading indicator that these AI-outsourcing choices carry real consequences, and that those consequences could be harder to reverse than we expect.

Here's how I'm distilling all of this into my own decision-making about AI adoption:

Ask whether something is a core skill that I want to maintain for myself or my team, regardless of how good an AI model gets.

Ask where the line is between augmenting that core skill (e.g., using ChatGPT as a research assistant or data analyst, while ensuring a human still does the core strategy or writing work) and outsourcing it (asking ChatGPT to generate the final output, whether that's an essay, a strategy document, or something else).

I'm not an anti-AI Luddite by any means. I use these tools daily in my own work. If we free ourselves from drudgery so that we can each focus on our highest-value skills as individuals and teams, that's a win. But I'm increasingly concerned that the line between augmenting and outsourcing core skills is blurry, and that leaders are offering little guidance on best practices.

Human intuition and expertise still matter at this stage, and we outsource that intuition at our peril. Maybe these models will keep improving until this discussion becomes moot. But if they don't, and they keep generating merely average responses, we need to be cautious and acknowledge what we stand to lose.

On a positive note, here are two Gen-AI use cases that I believe will be transformative and empowering for the nonprofit sector overall:

Data access. Gen-AI is very good at analyzing large data sets and summarizing key takeaways. This means people who don't speak data (SQL, Excel formulas, etc.) will increasingly be able to access insights without waiting on the Business Intelligence team bottleneck. One example from my work: an AI-powered workflow that analyzes data from a variety of platforms, data that wasn't previously accessible to everyone, and generates account-level engagement histories for major donors. This will free gift officers to focus on their core function of serving donors relationally rather than spending time sifting through pages of hard-to-read data.

Building bespoke software tools. Gen-AI is quite good at writing code, and my bet is that this will save nonprofits big money on software subscriptions. Instead of paying a full subscription fee for a software service when a team only uses one or two of its many features, a team might build its own custom solution with Lovable, Replit, Bolt, or v0 to solve a specific problem. A prime candidate is project management. Tools like Monday.com and Asana can be replaced if a team is willing to do the work to define its ideal workflow and build a small, custom internal tool to match. That's real money back in the bank if done thoughtfully.

Finally, there's a human side to this conversation: our core skills are often what bring us joy and satisfaction in our work. That's true for me in writing and strategy. I might use an AI tool for a quick edit, but I'm committed to making my own brain keep doing the work of processing and expressing big ideas.

Here's an invitation to join me. The next time you feel like you're about to be replaced by AI, just choose not to be. Use it to outsource the drudgery and make better use of your time, but keep practicing and improving the core skills that make you enjoy your role and career. And at an organizational level, let's make sure we're preserving the core functions that drive our success and not just averaging them out to save a quick buck.